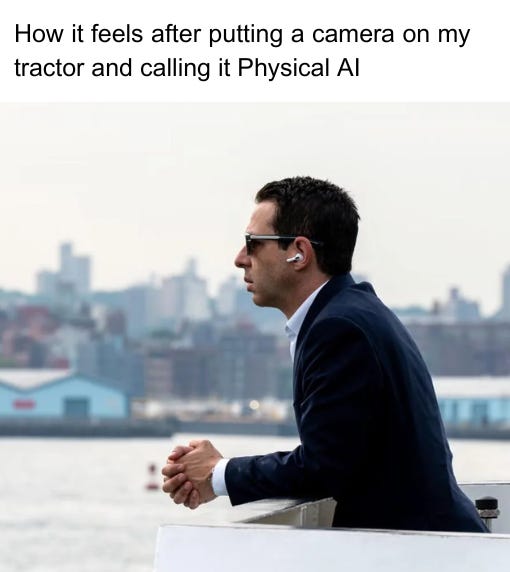

‘Physical AI’ in Agriculture: Real Technical Shift or Just a New Label for Ag Robotics?

It's partly marketing. But underneath the label is a real architectural shift in how robotic intelligence is built and deployed.

Hey folks!

In Issue #140 of Better Bioeconomy, I want to talk about a term that I have been coming across frequently lately: Physical AI.

Tractor companies, laser weeding startups, and drone platforms are all using it. At CES 2025, NVIDIA’s Jensen Huang described Physical AI as “the next frontier of AI,” and the phrase spread from there with the momentum that only a newly mainstream tech label can generate.

Every few years, the vocabulary in the agrifood tech space refreshes: from “precision agriculture” to “smart farming” to “ag robotics” and now “physical AI.” Some of those rebrands tracked real changes in what was happening on the ground, but others were mostly new dressing on a familiar pitch.

So which is this one? The answer, after reading into the underlying technology and watching which companies are doing what: a bit of both. Some of it is marketing. But underneath the label is a real architectural shift in how robotic intelligence is built and deployed. Recognising that shift helps me understand which developments in the space deserve attention right now.

Let’s dig in!

The previous wave of ag robots shared a structural problem that engineering alone couldn’t fix

The first thing to understand about Physical AI in agriculture is that it comes after a decade of promises that mostly didn’t deliver. “Wave 2” ag robotics, during 2015-2022, was built around a plausible-sounding bet: attach a computer vision model to a robot arm, and it can pick fruit, identify weeds, and scout for disease. Many companies launched on this premise. Several raised significant capital. Most either failed outright or remain stuck searching for commercial scale.

The failures get attributed to various causes in post-mortems: difficult outdoor conditions, high hardware costs, farmer scepticism, pandemic disruption, and poor funding timing. Hardware economics were a real constraint. So was the fundamental difficulty of dexterous manipulation in unstructured outdoor environments, a mechanical challenge that still needs work in applications like harvesting today.

These all played a role. But reading across the category, another constraint shows up repeatedly alongside them: the intelligence itself was often narrow and hard to transfer.

A strawberry-harvesting robot trained in one greenhouse did not necessarily transfer well to a different layout, variety, or growing region. An apple-picking robot optimised for one orchard geometry couldn’t adapt to a new farm configuration without restarting the data collection and model training process. Every new application was, in effect, a cold start.

If a system has to be heavily retrained or reconfigured every time the crop, geography, or workflow changes, its commercial scope narrows quickly. Layer on seasonal data collection constraints (you get one shot per year at harvesting strawberries), hardware costs that haven’t yet benefited from scale, and maintenance complexity in outdoor conditions, and the commercial window becomes much tighter than early narratives suggested.

This is not to say that transferability was the only thing that broke the previous wave. Rather, narrow, non-transferable intelligence appears to have been one of the recurring structural constraints that made all the other problems harder to overcome.

Physical AI stacks three technologies, and the VLA layer is the architectural shift

As mentioned at the start, the term “Physical AI” was popularised by NVIDIA’s Jensen Huang at CES 2025, where it served as the organising concept for NVIDIA’s push into robotics: the Isaac platform, the Jetson edge computing chips, and the Cosmos world foundation model.

Physical AI, as NVIDIA defines it, means AI that doesn’t just process information but perceives the physical world through sensors and acts in it through motors and actuators. The distinction from software AI is the closed loop between perception and physical action: a system that reads the scene and then moves, rather than reading the scene and producing text or a label.

That loop is built from three layers of technology that have been developing in parallel.

1. Foundation model

Foundation models are large AI systems pre-trained on enormous datasets, general enough to be adapted to a wide range of tasks without retraining from scratch. GPT, Gemini, and LLaMA are foundation models for language and multimodal understanding.

The core property is transferability. A foundation model trained on broad data can be fine-tuned on a small amount of task-specific data and perform well, instead of needing a full training run for each new application.

This is the architectural property that reduced the cold start problem in natural language processing around 2020. In robotics, the equivalent shift is underway. The cold start problem is not yet eliminated, but it is being structurally reduced.
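To make the transfer idea concrete, here is a toy Python sketch of fine-tuning. It is an illustration under stated assumptions, not how any production model is trained: `pretrained_features` is a hypothetical stand-in for a large frozen backbone, and the four-example task is invented. Only the small head on top is trained.

```python
import math

def pretrained_features(x):
    """Hypothetical stand-in for a frozen pre-trained backbone.
    Its 'weights' are fixed; fine-tuning never changes this function."""
    a, b = x
    return [a, b, a * b, 1.0]

def fine_tune_head(examples, epochs=200, lr=0.5):
    """Train only a small logistic-regression head on the frozen features."""
    weights = [0.0, 0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x, label in examples:
            feats = pretrained_features(x)
            z = sum(w * f for w, f in zip(weights, feats))
            pred = 1.0 / (1.0 + math.exp(-z))
            # Gradient step on the head only; the backbone stays untouched.
            weights = [w - lr * (pred - label) * f for w, f in zip(weights, feats)]
    return weights

def predict(weights, x):
    z = sum(w * f for w, f in zip(weights, pretrained_features(x)))
    return 1 if z > 0 else 0

# Invented task: label is 1 when both inputs have the same sign.
few_examples = [((1.0, 1.0), 1), ((-1.0, -1.0), 1), ((1.0, -1.0), 0), ((-1.0, 1.0), 0)]
head = fine_tune_head(few_examples)
```

Four labelled examples are enough here only because the frozen features already contain the structure the task needs (the `a * b` term). That is the property a foundation model is meant to provide at far larger scale.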

2. Vision-Language-Action (VLA) model

A VLA takes three inputs: a camera feed showing what the robot sees, a natural-language instruction describing what to do, and the robot’s current sensor state. It produces one output: the specific physical action to take next, expressed as motor commands, joint angles, or gripper positions.

VLAs extend foundation models into the physical world by adding that action output layer. A robot controlled by a VLA doesn’t require hand-coded rules for every situation. It reads the scene, parses the instruction, and reasons about how to move.
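The input and output contract described above can be sketched as a small Python interface. Everything here is hypothetical naming for illustration (`Observation`, `Action`, `stub_policy`, `control_loop`), and the stub policy is a hand-written placeholder where a trained VLA would sit.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Observation:
    image: List[List[int]]     # camera frame (here a tiny grayscale grid)
    instruction: str           # natural-language description of the task
    joint_angles: List[float]  # the robot's current sensor state

@dataclass
class Action:
    joint_deltas: List[float]  # change to apply to each joint
    gripper_closed: bool       # gripper command

Policy = Callable[[Observation], Action]

def stub_policy(obs: Observation) -> Action:
    """Placeholder for a trained VLA (hypothetical). A real model would infer
    the action from pixels and language; this stub ignores the image, moves
    each joint halfway to zero, and closes the gripper if asked to 'pick'."""
    deltas = [-0.5 * angle for angle in obs.joint_angles]
    return Action(joint_deltas=deltas,
                  gripper_closed="pick" in obs.instruction.lower())

def control_loop(policy: Policy, obs: Observation, steps: int) -> Observation:
    """Closed loop: observe, choose an action, apply it, observe again."""
    for _ in range(steps):
        action = policy(obs)
        new_angles = [a + d for a, d in zip(obs.joint_angles, action.joint_deltas)]
        # In a real system the next image would come from the camera.
        obs = Observation(obs.image, obs.instruction, new_angles)
    return obs

start = Observation(image=[[0, 255], [128, 64]],
                    instruction="Pick the ripe strawberry",
                    joint_angles=[1.0, -2.0])
final = control_loop(stub_policy, start, steps=3)
```

The interface stays the same whether the policy is a stub or a large model. Swapping in a different robot or task changes the policy's training data, not the loop around it.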

A VLA-controlled robot is like an experienced farmhand who has worked across dozens of different properties and crops and can read a new field after a short briefing, versus one who only ever trained on a single farm and needs months of retraining when anything changes.

The key property is that VLAs generalise across tasks. Physical Intelligence’s π0 model, not an agricultural application but a clear demonstration of what this architecture can do, was trained on data from seven different robot configurations across 68 tasks. The intelligence it encodes can generalise across hardware types and be fine-tuned to new manipulation tasks with far less task-specific data than training from scratch.

3. World foundation model

A big, persistent obstacle in training physical robots is that real-world data is expensive and slow to collect. In agriculture, it is also seasonal: you cannot train a strawberry-picking robot in January.

NVIDIA’s Cosmos is the world foundation model aimed at this problem. Trained on 20 million hours of real-world video comprising 9,000 trillion tokens, it can generate photorealistic synthetic environments for robot training. It is like a flight simulator for farming: instead of waiting for the season to collect real training data, you build the field in software and run thousands of synthetic harvests.

The goal is to compress the gap between simulation and reality enough that robots trained in virtual environments transfer reliably to the field. Danny Bernstein, CEO of The Reservoir, an agrifood robotics investor and startup incubator, described one operational signal I found very interesting: “sim-first development” is allowing teams to “iterate in production, not just in labs, and that changes what’s possible in 2026.”

Companies building on synthetic training environments are not constrained to one training cycle per year, which has historically been one of agriculture’s hardest structural limits on model development speed.
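One common ingredient of sim-first development is domain randomisation: varying scene parameters widely in simulation so a model does not overfit to one set of conditions. The Python sketch below shows only that sampling step. It is not how Cosmos works, and the scene fields and their ranges are hypothetical values chosen for illustration.

```python
import random
from dataclasses import dataclass
from typing import List

@dataclass
class SyntheticScene:
    sun_elevation_deg: float  # lighting angle
    canopy_density: float     # 0 = bare, 1 = fully occluded
    ripe_fruit_count: int
    camera_height_m: float

def sample_scene(rng: random.Random) -> SyntheticScene:
    """Draw one randomised scene description (ranges are assumptions)."""
    return SyntheticScene(
        sun_elevation_deg=rng.uniform(5.0, 85.0),
        canopy_density=rng.uniform(0.2, 0.9),
        ripe_fruit_count=rng.randint(0, 40),
        camera_height_m=rng.uniform(0.3, 1.2),
    )

def generate_dataset(n: int, seed: int = 0) -> List[SyntheticScene]:
    """Generate n scene descriptions; a renderer or world model would then
    turn each description into training images."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]

scenes = generate_dataset(1000)
```

A thousand scene variations take a fraction of a second to sample here, which is the contrast with one real harvest season per year.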

The question that separates real Physical AI from the rebrand is whether the intelligence can transfer

Before getting into specific companies, it helps to be clear about what Physical AI actually covers in agriculture, because the label is doing a lot of work across three different categories.

The first is manipulation robotics. These are systems that physically interact with crops through picking, laser weeding, or precision intervention at the plant level. This is the category where VLA models are most directly relevant.

The second is autonomous field vehicles: tractors and spray platforms that navigate autonomously but don’t perform dexterous manipulation. Their AI architecture is closer to autonomous vehicle technology, combining GPS/RTK positioning, obstacle detection, and path planning.

The third is AI-guided precision application: computer vision systems that identify a target (weeds, diseases, nutrient deficiencies) and trigger a response.

All three carry the Physical AI label. The cold-start argument applies most acutely to manipulation robotics, and commercially deployed VLA-based systems in agriculture are still early.

The test for whether a company is participating in the architectural shift or borrowing the vocabulary is: are they building intelligence that generalises across crops, contexts, and geographies, or building task-specific models that require restarting from scratch for each new application? The former inherits the generalisability that foundation models provide. The latter inherits the cold-start constraints that plagued Wave 2 ag robotics.

By that test, Carbon Robotics’ announcement in February is one of the more instructive examples of agricultural Physical AI to date. The company launched what it calls the Large Plant Model (LPM), which it describes as a foundation model trained on 150 million labelled plant images across crops, weeds, soil types, climates, and growth stages worldwide.

Rather than training a separate model for each new crop context, the LPM provides a base that Carbon says any new field configuration can be added to using two to three reference images, in minutes rather than weeks of data collection. Carbon describes it as “the foundation for Carbon AI, the decision-making brain operating across all of Carbon Robotics products.”

The cold start problem it is claiming to have resolved is specifically at the plant recognition layer: the system generalises across plant types rather than being retrained per crop. That is a meaningful shift from Wave 2 architecture.
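Nothing above says how the LPM handles new fields internally, so treat the following as a generic illustration rather than Carbon’s method. One standard way to add a class from a few reference images is nearest-prototype classification: average the embeddings of the references, then label new plants by similarity. The embeddings are assumed to come from a pre-trained model, and the vectors below are made up.

```python
import math
from typing import Dict, List

Vector = List[float]

def mean_vector(vectors: List[Vector]) -> Vector:
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def cosine_similarity(a: Vector, b: Vector) -> float:
    # Assumes neither vector is all zeros.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def build_prototypes(references: Dict[str, List[Vector]]) -> Dict[str, Vector]:
    """One averaged embedding per class, built from a few reference images."""
    return {label: mean_vector(vecs) for label, vecs in references.items()}

def classify(embedding: Vector, prototypes: Dict[str, Vector]) -> str:
    """Assign the label whose prototype is most similar to the embedding."""
    return max(prototypes, key=lambda label: cosine_similarity(embedding, prototypes[label]))

# Made-up embeddings standing in for two or three reference images per class.
references = {
    "crop": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1], [1.0, 0.0, 0.1]],
    "weed": [[0.1, 0.9, 0.2], [0.0, 1.0, 0.1]],
}
prototypes = build_prototypes(references)
```

No retraining happens in this sketch. Adding a new crop means adding a dictionary entry, which is why this style of approach can take minutes when the underlying embeddings are good.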

The companies passing the architecture test are still a limited subset of the full field now using “Physical AI” in their positioning. Most agricultural robots in commercial deployment today were built on Wave 2 architecture: task-specific models, one crop, one context.

Commercially deployed VLA-based systems in agriculture don’t yet exist at a meaningful scale. Carbon’s LPM is one of the most credible examples of foundation-model thinking applied to an agricultural perception task.

The next proof point will likely come from manipulation robotics, and from harvesting specifically, because that is the category where task-specific architecture struggled and where the transfer properties of foundation models change the economic math most significantly.

I think there is a reasonable case that agriculture may be structurally harder than the domains where foundation models have delivered results so far. Outdoor growing environments are much more variable than warehouses or factory floors. The sim-to-real gap, the difference between what a model learns in simulation and how it performs in an actual field, remains a challenge.

Seasonal data constraints mean that even a well-designed foundation model may need several growing cycles to accumulate enough real-world fine-tuning data to be reliable. And the mechanical challenges of dexterous manipulation in unstructured environments, soft fruit, variable canopy, unpredictable terrain, have not gone away just because the perception layer has improved.

The categories reaching commercial scale show where Physical AI gains traction next

The investment data from 2024 and 2025 tells a useful story about which categories of agricultural robotics have reached commercial traction, and what they have in common.

Weeding and AI-targeted spraying lead the field. Carbon Robotics raised a $70 million Series D in October 2024, with more than 100 LaserWeeders in customers’ hands. Ecorobotix, the Swiss AI-targeted micro-spraying company, raised $150 million across its Series C and D rounds in 2024 and 2025 and is scaling commercial operations globally. Its systems cut herbicide volumes by up to 95% through AI-targeted application rather than blanket coverage. John Deere’s See & Spray system offers the most instructive scale data in the category: by 2025, it was operating across more than five million acres, achieving herbicide reductions of close to 50% on average according to Deere’s own figures.

What these categories share is a favourable task structure. The decision the robot makes is narrow and often binary at the plant level: weed or crop, spray or skip. The outcome is directly measurable in input savings. And the task is repeatable across field conditions without requiring the kind of open-ended dexterity that harvesting demands. That combination of narrow decision, measurable outcome, and repeatable task is the recipe that has translated agricultural robotics into verified commercial ROI.
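The spray-or-skip structure is simple enough to write down in Python. This is a minimal sketch with made-up detector scores and a hypothetical 0.5 threshold. It measures savings against a baseline that treats every plant position, which is a simplification of blanket coverage.

```python
from typing import List

def spray_decisions(weed_probabilities: List[float], threshold: float = 0.5) -> List[bool]:
    """Binary decision per plant: True = spray, False = skip."""
    return [p >= threshold for p in weed_probabilities]

def herbicide_saving(decisions: List[bool]) -> float:
    """Fraction of herbicide saved versus treating every plant position."""
    if not decisions:
        raise ValueError("need at least one plant position")
    return 1.0 - sum(decisions) / len(decisions)

# Made-up detector scores for eight plant positions.
scores = [0.9, 0.1, 0.2, 0.7, 0.05, 0.3, 0.95, 0.4]
decisions = spray_decisions(scores)
saving = herbicide_saving(decisions)  # 3 of 8 positions sprayed, so 0.625
```

The saving falls straight out of the decisions. That direct line from model output to input cost is what makes the return on investment easy to verify in this category.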

Harvesting has not yet followed the same trajectory, and I think the reason is structural. It combines perception under variable field conditions, delicate manipulation, throughput requirements, seasonal data bottlenecks, and high reliability expectations in a labour-intensive workflow.

Three key takeaways

1. Transfer architecture is the filter that separates the shift from the rebrand

“Physical AI” will shortly be on the deck slide of every ag robot company with a camera and a motor. The terminology is not a useful filter. The question that cuts through is architectural: what happens when you move this system to a new variety in a new geography?

If the answer involves months of data collection and retraining, that is Wave 2 architecture with a new label. If the system can be fine-tuned on a small dataset and deployed in days, that is a fundamentally different scaling proposition with different economics.

2. Weeding’s commercial traction points to where the next category breaks through

Maarten Goossens, Founding Partner at Anterra Capital, identified the critical transition in a recent AgFunder investor survey: AI is moving “from models to applications embedded in workflows.” Agrifood, he argues, is “one of the largest real economy opportunity sets where workflows are still under-digitised, so ROI is unusually tangible.”

That tangibility is what made weeding and precision spraying the first categories to reach commercial scale, and it offers a blueprint for founders working in adjacent areas.

The categories closest to the same kind of breakout share specific structural properties: a narrow, well-defined decision at the plant or field level, an outcome that can be measured directly in input cost or yield terms, and a task that repeats across growing conditions. Harvest timing assessment, disease identification, and targeted nutrient application each have paths to that structure.

3. The data flywheel is the asset that compounds, and the robot is the collection mechanism

Carbon Robotics’ Large Plant Model, trained on 150 million plant images and growing continuously through its deployed fleet, is a useful frame for thinking about where durable competitive advantage sits. Deployed systems collect labelled field data, which improves the model, and better models make future deployments faster and more capable.

This flywheel logic is especially applicable to agrifood because agricultural data is hard to get, seasonal in nature, and highly contextual. Companies that own large, clean, well-labelled agricultural datasets hold the fine-tuning substrate that new entrants will struggle to replicate from scratch.

The underlying constraint is real: agricultural data remains sparse, fragmented, and poorly digitised across most of the sector. AI is only as good as what it is trained on, and a model built on incomplete or noisy data compounds those limitations at scale. The durable competitive question for this category is which company owns the data that makes the next generation of models work.

Disclaimer: The views and opinions expressed in this newsletter are my own and do not necessarily reflect those of my employer, affiliates, or any organisations I am associated with.